Fix INP With an AI Agent: The Metric Lab Tools Cannot Measure

INP cannot be simulated. This is the field-data-connected workflow for diagnosing and fixing Interaction to Next Paint with an AI agent.

Interaction to Next Paint is the hardest Core Web Vital for AI agents because it cannot be simulated. A standard Lighthouse page-load audit has no INP score. An AI agent without real user data cannot tell you which interaction is slow, who experiences it, or when in the page lifecycle it happens. This is how you fix INP when you have field data.

Last reviewed by Arjen Karel in March 2026

Why INP is different for AI agents

INP measures how fast your site responds to real user interactions: clicks, taps, key presses. It reports the slowest interaction of the entire session (in very long sessions, one outlier is ignored for every 50 interactions). The key word is real. A user clicks a filter dropdown on your product page while a third-party analytics script is executing. That 380ms delay becomes the INP for that session.
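To make the selection rule concrete, here is a minimal sketch of how one session's INP value is chosen from its interaction latencies. `sessionInp` is an illustrative name, not a browser API, and the sketch assumes the documented behavior that one highest-latency interaction is ignored for every 50 interactions.

```typescript
// Illustrative helper (not a browser API): pick the INP value for one session.
// Assumption: one highest-latency interaction is skipped per 50 interactions,
// so sessions with fewer than 50 interactions report their slowest one.
function sessionInp(latenciesMs: number[]): number | undefined {
  if (latenciesMs.length === 0) return undefined; // no interactions, no INP
  const sorted = latenciesMs.slice().sort((a, b) => b - a); // slowest first
  const skipped = Math.min(Math.floor(latenciesMs.length / 50), sorted.length - 1);
  return sorted[skipped];
}

// Five interactions; the 380ms click on the filter dropdown sets the INP.
const sessionLatencies = [40, 56, 380, 72, 48];
console.log(sessionInp(sessionLatencies)); // 380
```

One slow click is enough. The four fast interactions do not average it away.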

No lab tool can reproduce this. Lighthouse uses Total Blocking Time as a proxy, but TBT measures main thread blocking during page load. INP measures response time to interactions that happen at unpredictable moments throughout the session. A page with zero TBT can still have terrible INP if a background timer fires at the wrong moment. An agent without field data is blind to this. It optimizes TBT and declares victory while real users wait 400ms for their clicks to register.

Three phases, three different fixes

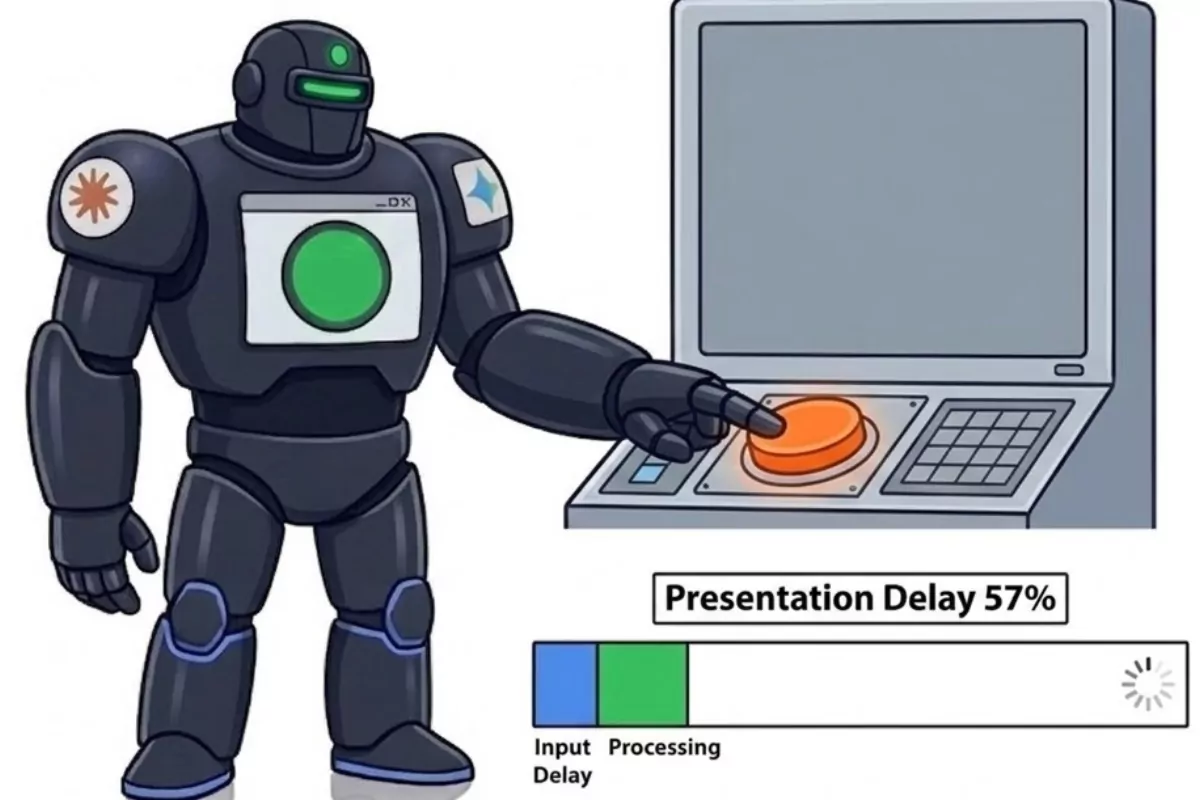

INP breaks down into three phases. Each one means a different fix.

Input Delay: The main thread was busy when the user interacted. During loading state, the usual causes are large JavaScript bundles executing, analytics scripts initializing, or framework hydration. At complete state (page fully loaded), blame background timers, third-party scripts polling for updates, or service worker activity.

Processing: The event handler itself is too slow. The usual causes are layout thrashing (read DOM, write DOM, read DOM in a loop), synchronous DOM queries inside the handler, expensive re-renders, or simply doing too much work in a single task without yielding.

Presentation: The browser takes too long to paint after the handler finishes. The usual causes are large DOM trees (thousands of nodes that need style recalculation), missing CSS containment, or expensive paint operations triggered by the handler's DOM changes.
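The three phases fall straight out of the timestamps on an Event Timing entry. The sketch below uses a hand-written subset of the `PerformanceEventTiming` fields so it runs outside a browser. `splitPhases` and the sample numbers are illustrative, not taken from any tool.

```typescript
// Subset of the PerformanceEventTiming fields needed for the split.
interface InteractionTiming {
  startTime: number;       // when the user interacted
  processingStart: number; // when the first event handler started
  processingEnd: number;   // when the last event handler finished
  duration: number;        // startTime to next paint, rounded by the browser
}

interface InpPhases {
  inputDelay: number;
  processing: number;
  presentation: number;
}

function splitPhases(t: InteractionTiming): InpPhases {
  const nextPaint = t.startTime + t.duration;
  return {
    inputDelay: t.processingStart - t.startTime,
    processing: t.processingEnd - t.processingStart,
    // Clamp at 0: duration is rounded, so the difference can dip slightly negative.
    presentation: Math.max(0, nextPaint - t.processingEnd),
  };
}

const examplePhases = splitPhases({
  startTime: 1000,
  processingStart: 1070,
  processingEnd: 1150,
  duration: 352,
});
console.log(examplePhases); // inputDelay 70, processing 80, presentation 202
```

In a browser the entries would come from a `PerformanceObserver` observing the `event` entry type. The arithmetic stays the same.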

Which script is blocking your page

CoreDash captures Long Animation Frames (LoAF) attribution from real user sessions. This is what lets the agent actually fix INP instead of guessing.

LoAF names the exact JavaScript file, the function, and the duration. The agent does not guess which script is blocking the main thread. CoreDash tells it: gtm-container.js blocked the main thread for 280ms during the interaction on the checkout page filter.

Instead of "your page is slow" you get "this file, this function, this duration." Compare that to Lighthouse, which tells you Total Blocking Time is 450ms and leaves you to figure out which of your 30 scripts caused it.

The agent opens the file, reads the code, and writes the fix: defer it, break it into smaller tasks, or rip it out if nobody needs it.
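A rough sketch of what "this file, this function, this duration" looks like in code. The interfaces are a hand-written subset of the Long Animation Frames fields (`scripts`, `sourceURL`, `sourceFunctionName`, `duration`). `worstScript`, the URLs, the function names, and the numbers are made up for illustration and say nothing about how CoreDash does it internally.

```typescript
// Subset of the long-animation-frame script attribution fields.
interface ScriptTiming {
  sourceURL: string;
  sourceFunctionName: string;
  duration: number;
}

interface LoafEntry {
  startTime: number;
  duration: number;
  scripts: ScriptTiming[];
}

// Return the longest-running script among frames that overlap the interaction.
function worstScript(
  frames: LoafEntry[],
  interactionStart: number,
  interactionEnd: number
): ScriptTiming | undefined {
  let worst: ScriptTiming | undefined;
  for (const frame of frames) {
    const frameEnd = frame.startTime + frame.duration;
    const overlaps = frame.startTime < interactionEnd && frameEnd > interactionStart;
    if (!overlaps) continue;
    for (const script of frame.scripts) {
      if (!worst || script.duration > worst.duration) worst = script;
    }
  }
  return worst;
}

// Hypothetical data: one long frame during a click on the checkout filter.
const exampleFrames: LoafEntry[] = [
  {
    startTime: 5000,
    duration: 320,
    scripts: [
      { sourceURL: "https://example.com/gtm-container.js", sourceFunctionName: "pollDataLayer", duration: 280 },
      { sourceURL: "https://example.com/app.js", sourceFunctionName: "onFilterClick", duration: 25 },
    ],
  },
];
const culprit = worstScript(exampleFrames, 5010, 5390);
console.log(culprit ? culprit.sourceURL + " blocked for " + culprit.duration + "ms" : "no overlapping frame");
```

Only frames that overlap the interaction count. A long frame ten seconds earlier is somebody else's problem.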

Loading vs loaded: two different problems

Whether the interaction happened during loading or complete state changes the fix entirely.

If INP is bad only during loading state, the problem is script loading order. Too much JavaScript executing before the page is interactive. The fix is in script deferral: defer non-critical scripts, code-split, reduce parser-blocking JavaScript.

If INP is bad at complete state, you have a runtime JavaScript problem. Something is running in the background on a fully loaded page. Third-party scripts polling for updates, analytics sending beacons, or your own code running expensive operations on a timer.

CoreDash tracks the page load state for every INP measurement. CWV Superpowers uses this to rule out half the causes before looking at scripts.
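The triage itself is simple enough to sketch. This assumes you already have p75 INP per load state and that 200ms is the "good" threshold. `suspectsByLoadState` and its labels are hypothetical and are not the CWV Superpowers implementation.

```typescript
type Suspect = "script loading order" | "runtime JavaScript";

// Assumption: inputs are p75 INP values (ms) per load state,
// and 200ms is the threshold for a "good" INP.
function suspectsByLoadState(loadingP75: number, completeP75: number, goodMs = 200): Suspect[] {
  const suspects: Suspect[] = [];
  if (loadingP75 > goodMs) suspects.push("script loading order");
  if (completeP75 > goodMs) suspects.push("runtime JavaScript");
  return suspects;
}

// Slow only while loading: look at deferral and code-splitting first.
console.log(suspectsByLoadState(410, 150));
// Slow only on a fully loaded page: look at timers and third parties first.
console.log(suspectsByLoadState(160, 320));
```

An empty result means both states are already under the threshold. Both labels in the result means you have two separate problems to fix.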

Proportional reasoning for INP

INP is 350ms on the checkout page. The phase breakdown from field data:

- Input Delay: 70ms (20%)

- Processing: 80ms (23%)

- Presentation: 200ms (57%)

Presentation is the bottleneck. 200ms does not sound alarming in isolation, but it is 57% of the total. Fixing Presentation moves the needle more than optimizing Input Delay and Processing combined.

Without the percentages, an agent chases Input Delay because 70ms exceeds some threshold. Show it the breakdown and it goes straight for the 57%. The result: one fix that drops INP below 200ms versus three scattered fixes that barely move it.
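The arithmetic behind the breakdown, as a small sketch. `phaseShares` is an illustrative helper. The inputs are the checkout page numbers from the example.

```typescript
interface PhaseRow {
  phase: string;
  ms: number;
  percent: number;
}

// Turn absolute phase durations into shares of the total, largest first.
function phaseShares(inputDelay: number, processing: number, presentation: number) {
  const total = inputDelay + processing + presentation;
  const toRow = (phase: string, ms: number): PhaseRow => ({
    phase,
    ms,
    percent: total === 0 ? 0 : Math.round((ms / total) * 100),
  });
  const rows = [
    toRow("Input Delay", inputDelay),
    toRow("Processing", processing),
    toRow("Presentation", presentation),
  ].sort((a, b) => b.ms - a.ms);
  return { total, rows, bottleneck: rows[0].phase };
}

const checkout = phaseShares(70, 80, 200);
console.log(checkout.total);      // 350
console.log(checkout.bottleneck); // Presentation
for (const row of checkout.rows) {
  console.log(row.phase + ": " + row.ms + "ms (" + row.percent + "%)");
}
```

Sorting by share rather than comparing each phase to a fixed threshold is the whole point. The biggest slice gets fixed first.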

Typical fixes by phase

Input Delay during loading: Defer non-critical scripts. Remove unused JavaScript. Code-split so only the code for the current page loads.

Input Delay at complete: Audit third-party scripts running after page load. Use the LoAF data from CoreDash to find the offending script. Defer it to idle time with requestIdleCallback.
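A minimal sketch of the idle-time deferral. `runWhenIdle` and the `IdleHost` shape are assumptions made so the helper can be exercised outside a browser. In a page you would pass `window` as the host. The fallback matters because `requestIdleCallback` is not available in every environment.

```typescript
// Minimal shape of the host object (window in a browser).
interface IdleHost {
  requestIdleCallback?: (cb: () => void, opts?: { timeout: number }) => unknown;
  setTimeout: (cb: () => void, ms: number) => unknown;
}

// Run a non-urgent task when the main thread is idle.
// Falls back to setTimeout where requestIdleCallback is unavailable.
function runWhenIdle(task: () => void, host: IdleHost, timeoutMs = 2000): "idle" | "timeout" {
  if (typeof host.requestIdleCallback === "function") {
    // The timeout makes sure the task still runs on a page that is never idle.
    host.requestIdleCallback(task, { timeout: timeoutMs });
    return "idle";
  }
  host.setTimeout(task, 0);
  return "timeout";
}

// Demo with the global object; reports which strategy was used.
const strategy = runWhenIdle(
  () => console.log("non-critical work ran"),
  globalThis as unknown as IdleHost
);
console.log("scheduled via: " + strategy);
```

This only moves work out of the way of interactions. If the deferred task is itself 280ms long, it still needs to be split up or removed.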

Processing: Yield to the main thread with scheduler.yield() or setTimeout(0). Split long event handlers into smaller tasks. Avoid forced layouts (reading layout properties immediately after writing to the DOM).
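Yielding can be sketched as follows. `yieldToMain` and `processInChunks` are illustrative names. The 50ms default budget is an assumption based on the long task threshold. The `scheduler.yield()` call is feature-detected with a zero-delay timeout as fallback.

```typescript
// Hand control back so the browser can handle input and paint.
// Uses scheduler.yield() where available, otherwise a zero-delay timeout.
function yieldToMain(): Promise<void> {
  const sched = (globalThis as any).scheduler;
  if (sched && typeof sched.yield === "function") {
    return sched.yield();
  }
  return new Promise<void>((resolve) => setTimeout(resolve, 0));
}

// Process items in slices, yielding whenever a slice has used its time budget.
// Resolves with the number of times it yielded.
async function processInChunks<T>(
  items: T[],
  work: (item: T) => void,
  budgetMs = 50
): Promise<number> {
  let yields = 0;
  let sliceStart = Date.now();
  for (const item of items) {
    work(item);
    if (Date.now() - sliceStart >= budgetMs) {
      await yieldToMain();
      yields++;
      sliceStart = Date.now();
    }
  }
  return yields;
}

// Demo: a budget of 0 forces a yield after every item.
processInChunks(["a", "b", "c"], (item) => console.log("handled " + item), 0)
  .then((count) => console.log("yielded " + count + " times"));
```

A time budget adapts to the device. A fixed chunk size that is fine on a desktop can still be one long task on a slow phone.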

Presentation: Use content-visibility: auto for large DOM sections below the fold (supported in all major browsers since September 2025). Reduce the number of DOM nodes affected by the handler's changes. Use CSS containment to isolate the repaint to a smaller area.
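If the styles are applied from script rather than a stylesheet, a sketch could look like this. `deferOffscreenRendering` and the `Styleable` type are hypothetical, the 500px estimate is a placeholder you would replace with a realistic section height, and in most cases the same two declarations in plain CSS are the simpler choice.

```typescript
// Minimal structural type so the helper accepts real HTMLElements
// as well as plain stand-ins outside a browser.
interface Styleable {
  style: { setProperty(name: string, value: string): void };
}

// Let the browser skip rendering work for off-screen sections.
// estimatedHeightPx reserves space so the scrollbar does not jump.
function deferOffscreenRendering(sections: Styleable[], estimatedHeightPx: number): void {
  for (const section of sections) {
    section.style.setProperty("content-visibility", "auto");
    section.style.setProperty("contain-intrinsic-size", `auto ${estimatedHeightPx}px`);
  }
}

// Demo with a stand-in element that records what was set.
const appliedStyles: string[] = [];
const standIn: Styleable = {
  style: {
    setProperty: (name: string, value: string) => {
      appliedStyles.push(name + ": " + value);
    },
  },
};
deferOffscreenRendering([standIn], 500);
console.log(appliedStyles.join("; "));
```

Only use it on sections that start below the fold. Applying it to content in the initial viewport gains nothing.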

Verifying with field data

INP improvements show up in CoreDash within a day or two of real traffic. Query the same page and device segment. The p75 should drop and the phase distribution should shift.

Watch the load state split too. If your fix targeted loading-state INP, confirm that the loading-state numbers improved without regressing complete-state numbers. Field data gives you this granularity. Lab data does not.
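A before-and-after check along these lines can be sketched in a few lines. The sample shape, the nearest-rank percentile, and the numbers are assumptions for illustration. Real exports from your RUM tool will look different.

```typescript
interface InpSample {
  loadState: "loading" | "complete";
  inpMs: number;
}

// Nearest-rank 75th percentile.
function p75(values: number[]): number | undefined {
  if (values.length === 0) return undefined;
  const sorted = values.slice().sort((a, b) => a - b);
  return sorted[Math.ceil(sorted.length * 0.75) - 1];
}

// p75 INP per load state for one set of samples.
function p75ByLoadState(samples: InpSample[]) {
  const pick = (state: InpSample["loadState"]) =>
    p75(samples.filter((s) => s.loadState === state).map((s) => s.inpMs));
  return { loading: pick("loading"), complete: pick("complete") };
}

const toSamples = (state: InpSample["loadState"], values: number[]): InpSample[] =>
  values.map((inpMs) => ({ loadState: state, inpMs }));

// Made-up samples for the same page and device segment.
const before = toSamples("loading", [300, 420, 380, 260]).concat(toSamples("complete", [120, 150, 180, 140]));
const after = toSamples("loading", [140, 180, 160, 120]).concat(toSamples("complete", [120, 150, 180, 140]));

console.log(p75ByLoadState(before)); // loading 380, complete 150
console.log(p75ByLoadState(after));  // loading 160, complete 150
```

Here the loading-state p75 drops while the complete-state p75 stays put. That is what a clean loading-state fix should look like.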
