CWV Superpowers: Automated Core Web Vitals Diagnosis

A free Claude Code skill that connects to your CoreDash field data and Chrome to diagnose LCP, INP, and CLS issues and generate code fixes.

CWV Superpowers is a free, open-source Claude Code skill that connects to your CoreDash field data and Chrome browser tools to diagnose Core Web Vitals issues. It identifies your worst bottleneck across real user data, traces the root cause, and generates the code fix. Not a list of Lighthouse suggestions. The element, the file, the line of code.

Last reviewed by Arjen Karel in March 2026

Quickstart:

The code is on GitHub. Install it in Claude Code with two commands:

/* Install the MarketPlace and the CWV SuperPower Skill */

/plugin marketplace add corewebvitals/cwv-superpowers

/plugin install cwv-superpowers@cwv-superpower

/* now start the Core Web Vitals AI superpower*/

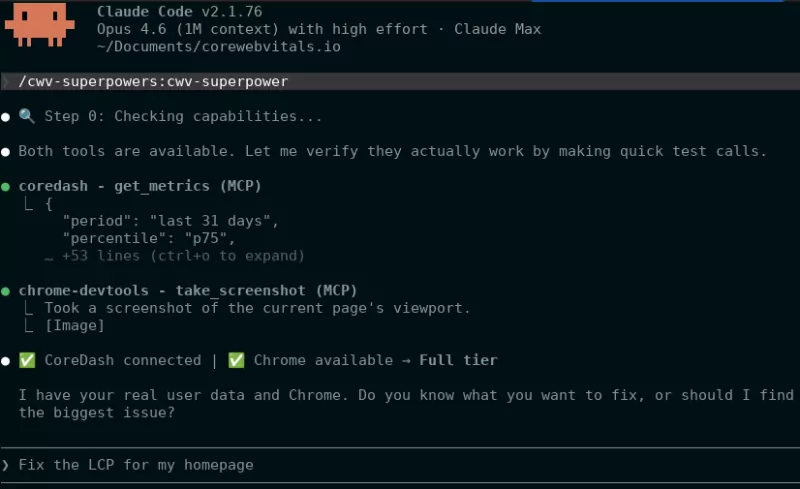

/cwv-superpower

Then add CoreDash as an MCP server and run claude --chrome. That is it.

What the CWV SuperPower Skill does

CWV Superpowers combines two data sources that together answer both what is slow and why.

CoreDash Real User Monitoring tells you what is actually slow. Real users, real devices, real networks. CoreDash tracks every page load and attributes every metric to the exact element causing the issue. When it says your LCP is 4.2 seconds and the bottleneck element is div.hero > img.main, that is what your actual users experience.

Chrome browser tracing tells you why. The skill visits the page with mobile emulation (4G network, 4x CPU throttling), records the network waterfall, and traces the exact phases that the RUM data identified. Not everything, only the part that has been proven to make your pages slow!

That is the trick! RUM data tells you what is slow but not why. A Chrome trace gives you the why, but without field data you are investigating the wrong page or the wrong metric. CWV Superpowers combines both.

It diagnoses all three Core Web Vitals: LCP (four-phase breakdown), INP (three-phase breakdown with script attribution), and CLS (pattern matching against known causes). For the full metric-specific workflows, see the LCP diagnosis guide and INP diagnosis guide.

The 5-step workflow

Every investigation follows five steps.

Step 1: Intent. You tell it what to look at ("LCP on my product pages") or ask it to find the problem ("What should I fix first?"). If you name something specific, it clarifies the scope. If you want discovery, it moves to Step 2.

Step 2: Discovery. The skill scans your site through CoreDash. It pulls overall health, mobile health, the worst URLs by LCP, and the worst by INP. Then it picks the biggest problem: poor ratings over needs improvement, mobile over desktop, higher traffic volume. A page with a p75 LCP of 2.4 seconds that "passes" but has 18% of users in poor territory is still a problem. CWV Superpowers catches that.
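The ordering in Step 2 can be sketched in a few lines of Python. This is a minimal illustration, not the skill's actual code: the `Candidate` fields, the nearest-rank percentile, and the sample values are assumptions made for the example. Only the LCP thresholds (2,500ms good, 4,000ms poor) are the standard Core Web Vitals limits.

```python
from dataclasses import dataclass
from math import ceil

LCP_GOOD_MS = 2500  # a p75 at or below this is rated "good"
LCP_POOR_MS = 4000  # a value above this is rated "poor"


def p75(samples: list[float]) -> float:
    """Nearest-rank 75th percentile of a non-empty sample list."""
    ordered = sorted(samples)
    return ordered[ceil(0.75 * len(ordered)) - 1]


def rating(value_ms: float) -> str:
    if value_ms <= LCP_GOOD_MS:
        return "good"
    return "needs improvement" if value_ms <= LCP_POOR_MS else "poor"


def poor_share(samples: list[float]) -> float:
    """Fraction of page loads that individually land in poor territory."""
    return sum(s > LCP_POOR_MS for s in samples) / len(samples)


@dataclass
class Candidate:  # hypothetical shape, not a CoreDash type
    url: str
    device: str  # "mobile" or "desktop"
    page_views: int
    lcp_samples: list[float]


RATING_RANK = {"poor": 0, "needs improvement": 1, "good": 2}


def priority_key(c: Candidate) -> tuple[int, int, int]:
    # Poor before needs improvement, mobile before desktop, more traffic first.
    return (RATING_RANK[rating(p75(c.lcp_samples))], int(c.device != "mobile"), -c.page_views)


# Made-up samples: the p75 "passes" while 18% of loads are still poor.
samples = [1800] * 50 + [2400] * 26 + [3200] * 6 + [5000] * 18
print(p75(samples), rating(p75(samples)), poor_share(samples))  # 2400 good 0.18
```

Sorting a list of candidates with `sorted(candidates, key=priority_key)` puts the biggest problem first. The last two lines show why a rating alone is not enough: a check on `poor_share` is what surfaces the page that looks fine at p75.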

Step 3: Diagnosis. For LCP, it makes 5 to 7 CoreDash API calls: the LCP element, the element type, the priority state, the four-phase breakdown (TTFB, Load Delay, Load Time, Render Delay), and the 7-day trend. It identifies the bottleneck using proportional reasoning: the phase consuming the largest share of total time, not the phase exceeding an absolute threshold. For INP, it pulls the slow interaction element, the LoAF (Long Animation Frames) scripts, and the three-phase breakdown. For CLS, it matches against five known cause patterns.
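Proportional reasoning is easy to show in code. The sketch below assumes the phase durations arrive as a simple mapping of name to milliseconds. The function name and the numbers are illustrative, not taken from the skill or from real data.

```python
def find_bottleneck(phases_ms: dict[str, float]) -> tuple[str, float]:
    """Return the phase with the largest share of total time, and that share."""
    total = sum(phases_ms.values())
    if total <= 0:
        raise ValueError("phase durations must sum to a positive total")
    name = max(phases_ms, key=phases_ms.get)
    return name, phases_ms[name] / total


# Hypothetical LCP phase values in milliseconds.
lcp_phases = {"TTFB": 800, "Load Delay": 1980, "Load Time": 700, "Render Delay": 340}
phase, share = find_bottleneck(lcp_phases)
print(f"{phase}: {share:.0%} of {sum(lcp_phases.values())} ms")  # Load Delay: 52% of 3820 ms
```

No phase is compared against a fixed limit. The 800ms TTFB here might look suspicious on its own, but it is not where most of the time goes. The same function works for an INP breakdown if you pass its three phases instead.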

Step 4: Chrome trace. If Chrome is available, the skill visits the page and traces only the bottleneck phase from Step 3. A targeted trace generates evidence. A full trace of everything generates noise.

Step 5: Output. Apply the code fix, generate an HTML report, or both. The fix names the file, the line, the element, and shows before and after.

What the output looks like

The skill generates a root cause statement that connects field data, Chrome evidence, and the specific fix:

Root cause: The LCP image div.hero-banner > img.product-main on /product/running-shoes-42 is discovered 1,980ms late because it lacks a preload hint and has no fetchpriority="high". CoreDash data: LCP is 3,820ms (poor) on mobile, p75. Load Delay is the bottleneck at 52% of total. Chrome trace confirms: 1,940ms gap between HTML first byte and image request in the network waterfall.

The fix follows directly: add a preload hint, set fetchpriority="high". Specific to the element, the file, and the cause. Not generic advice.
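To make the markup concrete, here is a small Python sketch that emits the two tags such a fix would add. The helper names and the image URL are hypothetical, and the real fix edits your template directly rather than generating strings. The attributes themselves (`rel="preload"`, `as="image"`, `fetchpriority="high"`) are standard HTML.

```python
from html import escape


def preload_link(image_url: str) -> str:
    """Preload hint for the <head>, so the image is discovered early."""
    href = escape(image_url, quote=True)
    return f'<link rel="preload" as="image" href="{href}" fetchpriority="high">'


def prioritized_img(image_url: str, css_class: str, alt: str) -> str:
    """The LCP <img> itself, marked as high priority."""
    src = escape(image_url, quote=True)
    return (
        f'<img class="{escape(css_class, quote=True)}" src="{src}" '
        f'alt="{escape(alt, quote=True)}" fetchpriority="high">'
    )


# Hypothetical image path for the product page.
url = "/img/running-shoes-42.jpg"
print(preload_link(url))
print(prioritized_img(url, "product-main", "Running shoes"))
```

The preload hint addresses late discovery. The `fetchpriority` attribute addresses queueing behind less important requests once the image is discovered.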

Full reports include metrics cards with color-coded ratings, phase breakdown charts, and, when Chrome was used, filmstrip screenshots and a network waterfall SVG. Both report types are self-contained HTML files you can drop in a Slack thread or attach to a ticket.

Tip: Chrome is optional. Without it you still get full RUM diagnosis, bottleneck identification, and code fixes. You lose the filmstrip and waterfall visuals, but the diagnosis quality is the same because real user data is the primary source of truth.

Getting started

You need a CoreDash account with real user data flowing. The free tier works. Get an API key from Project Settings > API Keys (MCP). The key is shown once, is stored only as a SHA-256 hash, and grants read-only access.
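Storing only a hash is why the key cannot be shown again. The sketch below shows the general pattern with Python's standard library. It is an assumption about how a service like this could work, not CoreDash's actual implementation, and everything about the key format beyond the `cdk_` prefix is made up.

```python
import hashlib
import hmac
import secrets


def issue_key() -> tuple[str, str]:
    """Create a key to show the user once, plus the digest to store."""
    key = "cdk_" + secrets.token_urlsafe(32)  # hypothetical key format
    return key, hashlib.sha256(key.encode()).hexdigest()


def verify(presented_key: str, stored_digest: str) -> bool:
    """Hash the presented key and compare digests in constant time."""
    digest = hashlib.sha256(presented_key.encode()).hexdigest()
    return hmac.compare_digest(digest, stored_digest)


key, stored = issue_key()
print(verify(key, stored))
print(verify(key + "x", stored))
```

The server keeps `stored` and never the key itself, so copy the key when it is displayed. If you lose it, the practical option is to create a new one.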

Claude Code (recommended)

# Add CoreDash MCP server

claude mcp add --transport http coredash https://app.coredash.app/api/mcp \
  --header "Authorization: Bearer cdk_YOUR_API_KEY"

# Add the skill from the marketplace

/plugin marketplace add corewebvitals/cwv-superpowers

/plugin install cwv-superpowers@cwv-superpower

# Start with Chrome for full analysis

claude --chrome

Type /mcp to verify CoreDash is connected. Then ask: "What are my Core Web Vitals?" If it returns your real LCP, INP, and CLS data, you are ready.

Cursor

Add CoreDash to .cursor/mcp.json:

{
  "mcpServers": {
    "coredash": {
      "url": "https://app.coredash.app/api/mcp",
      "headers": {
        "Authorization": "Bearer cdk_YOUR_API_KEY"
      }
    }
  }
}

Other MCP clients

The CoreDash MCP server works with any client that supports HTTP MCP: VS Code (Copilot agent mode), Windsurf, Gemini CLI, Cline, and JetBrains IDEs. The endpoint is https://app.coredash.app/api/mcp with a Bearer token header. See the setup guide on GitHub for per-client configuration.

For background on why field data matters for AI agent Core Web Vitals work and why Lighthouse-only agents produce fixes that get rejected a third of the time, read the overview article.
