Core Web Vitals and SEO: Do They Affect Google Rankings?

How much do Core Web Vitals actually affect Google rankings? I fixed CWV on hundreds of sites. Here is what the data shows.

This guide is part of our Core Web Vitals resource center. It covers everything I know about the relationship between Core Web Vitals and search engine rankings, based on 17 years of web performance consultancy and field data from hundreds of enterprise sites monitored with CoreDash.

Core Web Vitals and SEO

"We fixed our Core Web Vitals but our rankings didn't improve. What went wrong?"

I hear this almost every week. The answer is usually the same. Core Web Vitals is a confirmed Google ranking factor. But it works differently than most SEO guides suggest.

It is not a growth lever. It is a gate. Severe failures hurt you. Passing "good" removes the penalty. Going beyond "good" does nothing for SEO. A Lighthouse score of 100 will not outrank a Lighthouse score of 72 if the field data is the same.

I have been fixing Core Web Vitals for 17 years across hundreds of enterprise sites. I built my own Real User Monitoring platform (CoreDash) specifically because the existing tools did not give me the attribution data I needed. This page is what I know about how CWV actually affects search rankings. Not what Google's marketing says. Not what SEO blogs repeat. What I see in the field data.

Table of Contents

- Core Web Vitals and SEO

- Do Core Web Vitals Affect Google Rankings?

- How Google Uses Your CWV Data

- The Three Metrics and Their SEO Weight

- What the Data Shows: Studies and Real Results

- What Does NOT Affect Your SEO

- How to Check If CWV Is Hurting Your Rankings

- Fix CWV for SEO: Where to Start

- FAQ: Core Web Vitals and SEO Questions Answered

Do Core Web Vitals Affect Google Rankings?

Yes. Google confirmed this explicitly.

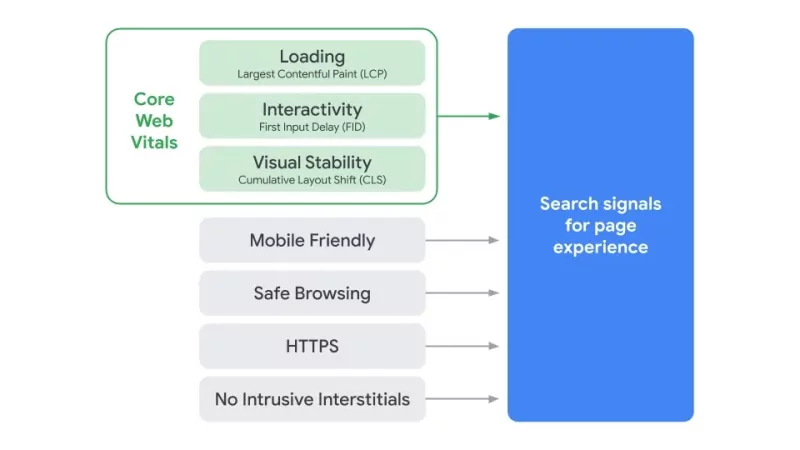

On March 12, 2024, Google updated their official documentation to state that Core Web Vitals are used by their ranking systems. This was not new behavior. The Page Experience rollout started in June 2021 for mobile and completed for desktop in March 2022. But the 2024 documentation update removed any remaining ambiguity.

Here is what Google actually said, in order of importance.

The official documentation (developers.google.com, March 2024): Core Web Vitals are used by Google's ranking systems. Not a separate "Page Experience system." Part of the core ranking infrastructure.

Danny Sullivan (Google Search Liaison, April 2023): Page experience is not a standalone ranking system. It is a set of signals used by multiple core ranking systems. This matters because it means CWV data feeds into the same systems that evaluate content quality, links, and relevance.

John Mueller (Google Search Advocate, 2024): Core Web Vitals are "not giant factors in ranking." He described them as more than a tiebreaker but less important than content relevance.

Mueller again (Reddit, 2021): "It's more than a tie-breaker, but it doesn't replace relevance of the content. Depending on the sites, there might be situations where it is more of a factor, and situations where it is less. As an SEO, I think it's worth working on, but I wouldn't drop everything else to focus on it."

That last quote is the most honest summary of CWV and SEO I have seen from anyone at Google. I agree with it completely.

Key takeaway: CWV is a confirmed ranking signal that feeds into Google's core ranking systems. It is not a standalone system. Its weight is small compared to content relevance and links, but it is real and measurable.

The "Tiebreaker" Framing Is Wrong

The SEO community latched onto the word "tiebreaker" in 2021 and never let go. Most articles still describe CWV as a tiebreaker. This is wrong.

Google corrected this. John Mueller and Gary Illyes both stated CWV is more than a tiebreaker. But the correction matters less than the mechanism. CWV is not a simple switch that breaks ties between two equally relevant pages. It is a signal that feeds into multiple ranking systems and gets weighted alongside hundreds of other signals. The weight is small. But it is real.

Think of it this way. If two pages have identical content quality, identical backlink profiles, and identical relevance to a query, the page with better CWV data will rank higher. But that scenario almost never exists in practice. What actually happens is that CWV contributes a small positive or negative signal that compounds with everything else Google evaluates.

How Google Uses Your CWV Data

This is where most SEO guides fail. They tell you to "improve your Core Web Vitals" without explaining what data Google actually looks at. The answer is specific and important.

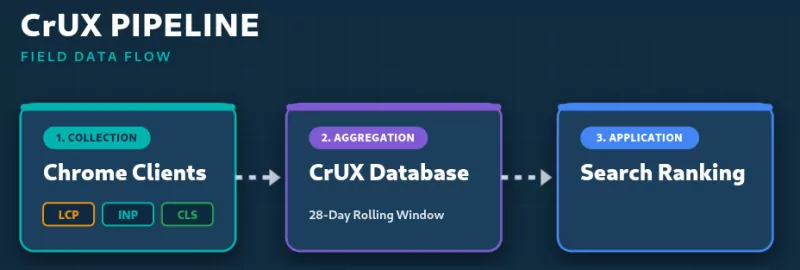

Google uses Chrome User Experience Report (CrUX) field data. Nothing else.

Not Lighthouse scores. Not PageSpeed Insights scores. Not any third party monitoring tool's numbers. CrUX field data from real Chrome users visiting your site.

The 28 Day Sliding Window

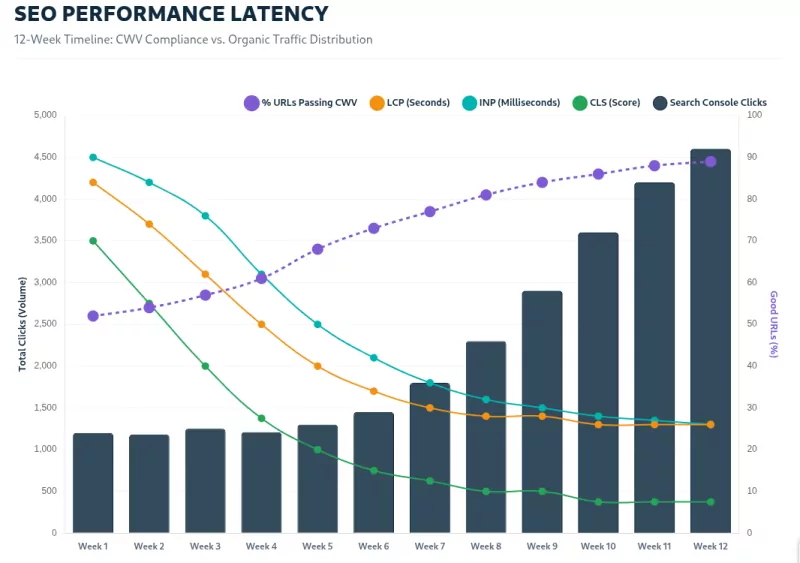

CrUX collects data over a rolling 28 day period. When you fix a performance issue today, it takes roughly 28 days before Google's data fully reflects the improvement. I see this consistently across client sites monitored with CoreDash. The RUM data shows the fix immediately. CrUX catches up weeks later. Search Console catches up after that.
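A rough sketch of why the lag exists. It assumes a toy site with 100 page loads per day, where every load took 4000 ms before the fix and 2000 ms after it. The traffic numbers and the helper names (`rollingP75`, `historyAfterFix`) are made up for illustration. This is not how CrUX aggregates internally, only a model of a 28 day rolling window.

```typescript
// Nearest-rank percentile over a list of samples.
function percentile(samples: number[], p: number): number {
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

// p75 over the trailing window. `days` holds one array of LCP samples (ms)
// per day, oldest first.
function rollingP75(days: number[][], windowDays: number = 28): number {
  const recent = days.slice(-windowDays);
  const pooled = recent.reduce<number[]>((all, day) => all.concat(day), []);
  return percentile(pooled, 75);
}

// Hypothetical traffic: 100 loads per day, all slow before the fix, all fast after.
const slowDay: number[] = new Array<number>(100).fill(4000);
const fastDay: number[] = new Array<number>(100).fill(2000);

// 28 days of pre-fix history followed by `daysSinceFix` days of post-fix data.
function historyAfterFix(daysSinceFix: number): number[][] {
  const history: number[][] = [];
  for (let i = 0; i < 28; i++) history.push(slowDay);
  for (let i = 0; i < daysSinceFix; i++) history.push(fastDay);
  return history;
}

for (const d of [0, 7, 14, 20, 21, 28]) {
  console.log(`day ${d} after fix: rolling p75 = ${rollingP75(historyAfterFix(d))} ms`);
}
```

In this toy model the rolling p75 only flips once 75% of the pooled samples are post-fix loads, and the old data is fully gone after 28 days. Real traffic is a mix of fast and slow loads, so the exact day the window crosses the threshold will differ per site.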

Only Chrome Users Count

Safari users generate zero CWV data for Google. Firefox users generate zero. Chrome on iOS does not report to CrUX either, which means iPhone users are completely invisible to Google's CWV evaluation. On sites where 40% to 60% of traffic comes from iOS (common in the US and Western Europe), Google only sees CWV data from the remaining Chrome users on Android and desktop. This creates a blind spot that most SEOs do not account for.

Chrome Users Must Have Reporting Enabled

Not every Chrome user contributes to CrUX. The user must have sync enabled and have opted into sharing usage statistics. This further reduces the sample.

The 75th Percentile Threshold

Google evaluates CWV at the 75th percentile of real user experiences. Not the average. Not the median. The 75th percentile. This means up to 25% of page loads can be terrible and you still pass. But it also means that once slightly more than a quarter of page loads miss the threshold, you fail, no matter how fast the rest are.
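A small sketch of what the 75th percentile rule means in practice. A metric passes when at least 75% of samples are at or below the threshold. The sample values and function names below are invented for illustration.

```typescript
// Number of samples at or below the threshold.
function countWithin(samples: number[], threshold: number): number {
  return samples.filter((s) => s <= threshold).length;
}

// Passing at p75 is equivalent to: at least 75% of samples are within the threshold.
// Integer math avoids floating point surprises at exactly 75%.
function passesAtP75(samples: number[], threshold: number): boolean {
  if (samples.length === 0) return false;
  return countWithin(samples, threshold) * 100 >= samples.length * 75;
}

function mean(samples: number[]): number {
  return samples.reduce((sum, s) => sum + s, 0) / samples.length;
}

// Hypothetical LCP samples in milliseconds.
// Site A: 75 fast loads, 25 terrible loads.
const siteA: number[] = new Array<number>(75).fill(1800).concat(new Array<number>(25).fill(9000));
// Site B: 74 fast loads, 26 loads that are only slightly too slow.
const siteB: number[] = new Array<number>(74).fill(1800).concat(new Array<number>(26).fill(2600));

console.log("Site A mean:", mean(siteA), "passes LCP:", passesAtP75(siteA, 2500));
console.log("Site B mean:", mean(siteB), "passes LCP:", passesAtP75(siteB, 2500));
```

Site A has the worse average but passes. Site B has the better average but fails. That is the difference between evaluating an average and evaluating the 75th percentile.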

Mobile and Desktop Are Evaluated Separately

A site can pass CWV on desktop and fail on mobile. Google evaluates them independently. Since most sites have worse performance on mobile and most searches happen on mobile, CWV ranking impact tends to be larger on mobile.

Pages Without Enough Data Use Origin Level Scores

If a specific URL does not have sufficient CrUX data, Google falls back to the entire origin's (domain's) CWV scores. This means poor performance on high traffic pages can drag down rankings for low traffic pages across your entire site.
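To see which level of data exists for a page, you can ask the CrUX API for the URL first and for the origin second. The sketch below mirrors that order. It is not Google's ranking logic, only a way to check what data is available. `post` is a hypothetical helper you supply: it sends the body to the CrUX API `records:queryRecord` endpoint and resolves to the parsed record, or to `null` when the API reports no data.

```typescript
type FormFactor = "PHONE" | "DESKTOP" | "TABLET";

interface CruxRequest {
  url?: string;
  origin?: string;
  formFactor: FormFactor;
  metrics: string[];
}

interface CruxResult {
  scope: "url" | "origin" | "none";
  record: object | null;
}

// Hypothetical transport: resolves to the record, or null when CrUX has no data.
type PostFn = (body: CruxRequest) => Promise<object | null>;

const CWV_METRICS: string[] = [
  "largest_contentful_paint",
  "interaction_to_next_paint",
  "cumulative_layout_shift",
];

// Build the URL level request and the origin level request for one page.
function buildCruxRequests(pageUrl: string, formFactor: FormFactor): CruxRequest[] {
  const origin = new URL(pageUrl).origin;
  return [
    { url: pageUrl, formFactor, metrics: CWV_METRICS },
    { origin, formFactor, metrics: CWV_METRICS },
  ];
}

// Try URL level data first, then fall back to origin level data.
async function queryWithOriginFallback(
  pageUrl: string,
  formFactor: FormFactor,
  post: PostFn
): Promise<CruxResult> {
  const [urlRequest, originRequest] = buildCruxRequests(pageUrl, formFactor);
  const urlRecord = await post(urlRequest);
  if (urlRecord !== null) return { scope: "url", record: urlRecord };
  const originRecord = await post(originRequest);
  if (originRecord !== null) return { scope: "origin", record: originRecord };
  return { scope: "none", record: null };
}
```

If the result comes back with `scope: "origin"`, the page itself has too little traffic for its own CrUX record, and the numbers you are looking at describe the whole site.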

The Three Metrics and Their SEO Weight

Three metrics make up Core Web Vitals as of March 2024. All three must pass. It is a pass/fail system. Two "good" and one "poor" means you fail.
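The pass/fail rule can be sketched in a few lines. The "good" thresholds are the ones listed in this section. The "poor" cutoffs (4000 ms, 500 ms, 0.25) come from Google's published thresholds and are not discussed in this article. The type and function names are my own.

```typescript
type Rating = "good" | "needs-improvement" | "poor";

interface P75Values {
  lcpMs: number;
  inpMs: number;
  cls: number;
}

interface Cutoffs {
  good: number;
  poor: number;
}

const THRESHOLDS: { lcpMs: Cutoffs; inpMs: Cutoffs; cls: Cutoffs } = {
  lcpMs: { good: 2500, poor: 4000 },
  inpMs: { good: 200, poor: 500 },
  cls: { good: 0.1, poor: 0.25 },
};

// Rate a single p75 value against its cutoffs.
function rate(value: number, cutoffs: Cutoffs): Rating {
  if (value <= cutoffs.good) return "good";
  if (value <= cutoffs.poor) return "needs-improvement";
  return "poor";
}

// A page (or origin) passes only when all three metrics rate "good".
function assess(p75: P75Values): { ratings: { lcp: Rating; inp: Rating; cls: Rating }; passes: boolean } {
  const ratings = {
    lcp: rate(p75.lcpMs, THRESHOLDS.lcpMs),
    inp: rate(p75.inpMs, THRESHOLDS.inpMs),
    cls: rate(p75.cls, THRESHOLDS.cls),
  };
  const passes = ratings.lcp === "good" && ratings.inp === "good" && ratings.cls === "good";
  return { ratings, passes };
}

console.log(assess({ lcpMs: 2400, inpMs: 180, cls: 0.05 })); // all three good
console.log(assess({ lcpMs: 1200, inpMs: 150, cls: 0.3 }));  // fast LCP, but CLS is poor
```

Note that an LCP of 2400 ms and an LCP of 1200 ms produce the same rating. Only the rating feeds the pass/fail outcome.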

LCP: Largest Contentful Paint

Threshold: 2.5 seconds or less.

LCP measures how long it takes for the largest visible element to render. Usually a hero image or a large heading. This is the metric with the strongest observed correlation to ranking position. The Advanced Web Ranking study of 3 million pages found a clear pattern: pages ranking in positions 1 through 3 had measurably lower LCP values than pages ranking in positions 8 through 10.

LCP is also the metric where I see the most failures. The most common anti pattern I find across audits: loading="lazy" on the LCP image. This hides the image from the browser's preload scanner and delays discovery by hundreds of milliseconds. I find this on roughly 4 out of 5 sites I audit.
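A minimal sketch for spotting this in the browser. It assumes a Chromium based browser that exposes `largest-contentful-paint` entries through `PerformanceObserver`. The helper name `isLazyLoadedLcpImage` is my own. Outside a browser the observer part is skipped.

```typescript
interface LcpCandidate {
  tagName: string;
  loading: string | null;
}

// True when the LCP element is an <img> that was marked loading="lazy".
function isLazyLoadedLcpImage(el: LcpCandidate): boolean {
  return el.tagName.toUpperCase() === "IMG" && (el.loading || "").toLowerCase() === "lazy";
}

// Browser wiring. Typed loosely so the file also loads where DOM types are absent.
const env = globalThis as any;
if (typeof env.document !== "undefined" && typeof env.PerformanceObserver !== "undefined") {
  new env.PerformanceObserver((list: any) => {
    const entries = list.getEntries();
    const latest = entries[entries.length - 1]; // the most recent LCP candidate
    const el = latest && latest.element;
    if (el && isLazyLoadedLcpImage({ tagName: el.tagName, loading: el.getAttribute("loading") })) {
      console.warn("LCP image is lazy loaded:", el.currentSrc || el.src);
    }
  }).observe({ type: "largest-contentful-paint", buffered: true });
}
```

Paste it into the console or ship it in a debug build. If the warning fires, remove `loading="lazy"` from that image.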

For a complete guide to fixing LCP, see Largest Contentful Paint (LCP).

INP: Interaction to Next Paint

Threshold: 200 milliseconds or less.

INP replaced First Input Delay (FID) on March 12, 2024. This matters for SEO because every correlation study published before that date used FID. FID was easy to pass. Over 95% of sites passed FID. INP is harder. According to the 2024 Web Almanac, only 74% of mobile origins pass INP.

INP observes the latency of every interaction during a page visit, not just the first one, and reports one of the slowest. Heavy JavaScript, long main thread tasks, and poorly structured event handlers cause INP failures. Fixing INP usually requires architectural changes to how JavaScript executes. It is the hardest metric to fix.

No published study has measured the correlation between INP scores and ranking position. Every existing study used FID. This is the single biggest gap in the CWV research landscape.

For a complete guide to fixing INP, see Interaction to Next Paint (INP).

CLS: Cumulative Layout Shift

Threshold: 0.1 or less.

CLS measures unexpected visual movement during the page lifecycle. Images without dimensions, dynamically injected ads, web fonts causing text reflow. This is usually the easiest metric to fix.

A study of 7 million web sessions by Agency FIFTY3 found a moderate negative correlation between CLS and engagement rate (r = -0.470). Pages with lower CLS had higher engagement. This makes intuitive sense. Users who see the page jump around tend to leave. And engagement metrics feed into Google's ranking signals independently of CWV.

For a complete guide to fixing CLS, see Cumulative Layout Shift (CLS).

What the Data Shows: Studies and Real Results

The Correlation Studies

Every major study measuring CWV's ranking impact was conducted between 2021 and 2022 using FID as the interactivity metric. FID is no longer a Core Web Vital. Keep that context in mind.

Perficient / Eric Enge (December 2021): Compared ranking correlation before and after the Page Experience rollout. Found CWV was "not a large ranking factor." A correlation between better CWV and higher rankings existed, but it did not change meaningfully after the Page Experience update launched. Conclusion: sites with good CWV also tend to have good engineering overall. Causation is hard to separate from correlation.

Advanced Web Ranking (2022): Analyzed 3 million pages. Found LCP showed the clearest visual trend with ranking position. But also found that many pages ranking in the top 10 had no CrUX data at all. Meaning Google ranked them on other signals entirely.

Search Engine Land (2025): Analyzed 107,352 pages for AI search visibility. Found CWV acts as a constraint for AI visibility, not a growth lever. Weak negative correlation between LCP and search presence (r = -0.12 to -0.18). Their conclusion: CWV compliance works as a penalty avoidance mechanism. Not a competitive advantage.

The pattern across all studies is consistent. CWV is a lightweight signal. Severe failures hurt. Being "good" removes the penalty. Going from "good" to "excellent" produces no measurable ranking benefit.

Case Studies Where CWV Moved the Needle

The case studies that show ranking improvements from CWV fixes share a common trait: the sites had severe failures before the fix.

Vodafone Italy: Improved LCP by 31%. Saw an 8% increase in sales. When a high traffic ecommerce site goes from failing to passing LCP, the compounding effect of slightly better rankings plus better user engagement produces measurable business results.

Redbus (India): Reduced CLS from 1.65 to 0. Mobile conversion rates increased by 80% to 100%. Domain ranking in Colombia improved 192%. A CLS score of 1.65 is catastrophic. Fixing that removes a massive penalty.

SiteCare (nonprofit): Moved all URLs from "Poor" to "Good" CWV. Gained 35,000 additional impressions per month and 7,000 additional sessions. Again: from failing to passing.

CoinStats: Fixed Base64 encoded images causing slow LCP. 300% increase in URLs passing CWV. 300% increase in search impressions.

The lesson in every case study is the same. The gains came from fixing severe failures. Not from incremental improvements. Not from chasing Lighthouse scores.

What I See Across CoreDash Monitored Sites

Across the sites I monitor with CoreDash, the pattern holds. When I fix CWV from "Poor" to "Good" on a site with otherwise solid content and links, I typically see modest ranking improvements over the following 4 to 8 weeks. When I improve CWV from "Needs Improvement" to "Good," the ranking effect is smaller and sometimes invisible. When I push a site from a "Good" LCP of 2.4 seconds down to 1.2 seconds, I see zero ranking change.

The threshold is what matters for SEO. Not the score.

What Does NOT Affect Your SEO

This section exists because I spend too much time in client meetings correcting misconceptions.

Lighthouse Scores Are Completely Ignored by Google for Ranking

I need to be direct about this. Google does not look at your Lighthouse score. At all. For any purpose related to ranking.

Malte Ubl, who shipped the Page Experience ranking rollout while he was Director of Search at Google, stated explicitly: Google does not consider your Lighthouse score in any way for search ranking. He now works at Vercel, so he can be candid about this.

Lighthouse runs a lab simulation on a single device with a throttled connection. It produces a number between 0 and 100. That number has zero relationship to how Google ranks your pages.

PageSpeed Insights Scores Are Not Ranking Factors

The big number at the top of PageSpeed Insights is a Lighthouse lab score. It is not what Google uses for ranking. The field data section below that number shows CrUX data. That is what Google uses. Most SEOs look at the big number and ignore the field data section. They are looking at exactly the wrong thing.
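If you pull PageSpeed Insights through its API, the same split applies. The sketch below reads the field data and keeps the lab score separate. The response field names reflect my understanding of the PageSpeed Insights v5 response (including CLS being reported multiplied by 100), so verify them against the API reference before relying on this. `readPsi` is a made up helper name.

```typescript
interface PsiMetric {
  percentile: number;
  category?: string;
}

interface PsiResponse {
  loadingExperience?: { metrics?: { [key: string]: PsiMetric | undefined } };
  lighthouseResult?: { categories?: { performance?: { score: number | null } } };
}

interface PsiSummary {
  field: { lcpMs: number | null; inpMs: number | null; cls: number | null };
  labScore: number | null; // diagnostic only, not a ranking input
}

function readPsi(resp: PsiResponse): PsiSummary {
  const metrics = (resp.loadingExperience && resp.loadingExperience.metrics) || {};
  const lcp = metrics["LARGEST_CONTENTFUL_PAINT_MS"];
  const inp = metrics["INTERACTION_TO_NEXT_PAINT"];
  const cls = metrics["CUMULATIVE_LAYOUT_SHIFT_SCORE"];
  const perf =
    resp.lighthouseResult && resp.lighthouseResult.categories
      ? resp.lighthouseResult.categories.performance
      : undefined;
  return {
    field: {
      lcpMs: lcp ? lcp.percentile : null,
      inpMs: inp ? inp.percentile : null,
      // Assumption: the API reports CLS as an integer (value times 100).
      cls: cls ? cls.percentile / 100 : null,
    },
    labScore: perf && perf.score !== null ? Math.round(perf.score * 100) : null,
  };
}

// Hypothetical response: poor lab score, passing field data.
const sample: PsiResponse = {
  loadingExperience: {
    metrics: {
      LARGEST_CONTENTFUL_PAINT_MS: { percentile: 2100 },
      INTERACTION_TO_NEXT_PAINT: { percentile: 160 },
      CUMULATIVE_LAYOUT_SHIFT_SCORE: { percentile: 8 },
    },
  },
  lighthouseResult: { categories: { performance: { score: 0.46 } } },
};

console.log(readPsi(sample));
```

In the sample above the lab score is 46 while all three field values are within the "good" thresholds. For ranking purposes, only the field values matter.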

Going From "Good" to "Perfect" Does Nothing for SEO

Mueller said it clearly: "Getting those last few percent can be a ton of work... your site's SEO generally won't change because of that." And: "A perfect score is a fun technical challenge, and you'll learn something along the way... but it's not going to make your site's rankings jump up."

I love Lighthouse. I use it every day. It is an excellent diagnostic tool. But when a client asks me to get their Lighthouse score to 100 for SEO purposes, I tell them they are spending their budget on the wrong problem. Get to "good" in the field data. Then spend the remaining budget on content and links.

How to Check If CWV Is Hurting Your Rankings

Here is exactly what I do when a client asks if Core Web Vitals is causing their ranking problems.

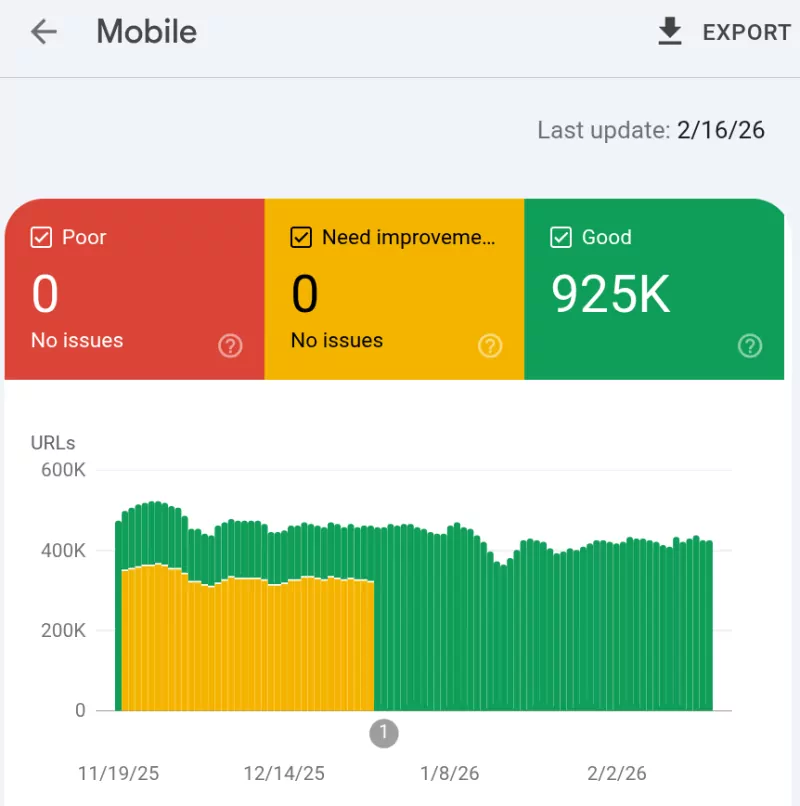

Step 1: Open Google Search Console. Go to the Core Web Vitals report. If all URLs show "Good" for both mobile and desktop, CWV is not your problem. Stop investigating CWV and look at content, links, or technical SEO issues instead.

Step 2: If URLs show "Poor" or "Needs Improvement," identify which specific URLs and which specific metric fails. CWV failures are always metric specific. You do not have a "CWV problem." You have an LCP problem, or an INP problem, or a CLS problem.

Step 3: Check CrUX data for the failing pages. Use the CrUX Dashboard or PageSpeed Insights (the field data section, not the Lighthouse score). Confirm the failure exists in field data, not just in lab tests.

Step 4: Deploy RUM monitoring. CrUX tells you what fails. RUM tells you why. This is why I built CoreDash. I needed page level attribution data that breaks down exactly which element caused the LCP delay, which interaction caused the INP failure, which DOM change caused the CLS. Without attribution data, you are guessing.

Step 5: Fix the specific issues. Each metric requires different fixes. I cover these in detail on the dedicated pages for LCP, INP, and CLS.

Step 6: Wait. The 28 day CrUX window means changes take roughly a month to reflect in Google's data. Check Search Console again after 4 to 6 weeks. Compare impressions and average position for the affected URLs before and after the CWV improvement.
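The before and after comparison in Step 6 can be sketched like this. It assumes you exported daily rows for the affected URLs from Search Console, with dates as `YYYY-MM-DD` strings. The row shape and function names are hypothetical. Average position is weighted by impressions, and the days inside the 28 day settling window are left out of both periods.

```typescript
interface DailyRow {
  date: string; // YYYY-MM-DD
  impressions: number;
  position: number; // average position for that day
}

interface PeriodSummary {
  impressions: number;
  avgPosition: number | null;
}

// Impression weighted average position over a set of daily rows.
function summarize(rows: DailyRow[]): PeriodSummary {
  const impressions = rows.reduce((sum, r) => sum + r.impressions, 0);
  const weighted = rows.reduce((sum, r) => sum + r.position * r.impressions, 0);
  return { impressions, avgPosition: impressions > 0 ? weighted / impressions : null };
}

// Add whole days to a YYYY-MM-DD date string (UTC).
function addDays(date: string, days: number): string {
  const d = new Date(date + "T00:00:00Z");
  d.setUTCDate(d.getUTCDate() + days);
  return d.toISOString().slice(0, 10);
}

// "before" is everything prior to the fix. "after" starts once the CrUX window has rolled over.
function compareAroundFix(rows: DailyRow[], fixDate: string, settleDays: number = 28) {
  const afterStart = addDays(fixDate, settleDays);
  const before = summarize(rows.filter((r) => r.date < fixDate));
  const after = summarize(rows.filter((r) => r.date >= afterStart));
  return { before, after, afterStart };
}
```

A lower average position after the fix is an improvement. Treat the result as a hint, not proof: seasonality, content changes, and algorithm updates all move the same numbers.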

Fix CWV for SEO: Where to Start

If your CWV data shows failures, here is how I prioritize fixes for maximum SEO impact.

Priority 1: Fix any "Poor" metric to "Good." The ranking signal lives at the threshold. Moving from "Poor" to "Good" is where the SEO benefit exists. This is the only thing that matters for ranking purposes.

Priority 2: Fix LCP first. It has the strongest observed correlation with ranking position and it is where most sites fail. The most common problems I fix in order of frequency: hero images with loading="lazy", render blocking CSS and JavaScript in the <head>, uncompressed or improperly sized images, slow server response times (TTFB above 800ms), and late discovered LCP resources that the preload scanner cannot find.

Priority 3: Fix CLS second. CLS has the strongest correlation with user engagement, which independently affects rankings through behavioral signals. Common fixes: add explicit width and height attributes to all images and videos, use aspect-ratio in CSS for responsive containers, preload custom fonts and set font-display: swap, and reserve space for dynamically injected content like ads.

Priority 4: Fix INP last. INP is the hardest to fix because it usually requires JavaScript architecture changes. Common fixes: break long tasks with scheduler.yield(), defer non essential JavaScript, reduce third party script execution, and move heavy computation off the main thread.
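A minimal sketch of breaking up a long task, assuming `scheduler.yield()` is available and falling back to `setTimeout` where it is not. The 50 ms budget matches the long task threshold. The function names are my own.

```typescript
// Give the browser a chance to handle input and paint before continuing.
function yieldToMain(): Promise<void> {
  const sched = (globalThis as any).scheduler;
  if (sched && typeof sched.yield === "function") {
    return sched.yield();
  }
  // Fallback for environments without scheduler.yield().
  return new Promise<void>((resolve) => setTimeout(resolve, 0));
}

// Process items one by one, yielding whenever the current slice has used its budget.
// Resolves to the number of times it yielded.
async function processInChunks<T>(
  items: T[],
  handle: (item: T) => void,
  budgetMs: number = 50
): Promise<number> {
  let yields = 0;
  let sliceStart = Date.now();
  for (const item of items) {
    handle(item);
    if (Date.now() - sliceStart >= budgetMs) {
      await yieldToMain();
      yields++;
      sliceStart = Date.now();
    }
  }
  return yields;
}
```

Usage looks like `await processInChunks(rows, renderRow)` inside an event handler, where `rows` and `renderRow` stand in for your own data and work. The total work stays the same, but no single task blocks the main thread for much longer than the budget, so an interaction that arrives mid-way gets handled sooner.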

For detailed technical guides on each metric, see the dedicated pages in this cluster:

- Largest Contentful Paint (LCP)

- Interaction to Next Paint (INP)

- Cumulative Layout Shift (CLS)

- How to Pass Core Web Vitals

FAQ: Core Web Vitals and SEO Questions Answered

How much do Core Web Vitals affect rankings?

A small but confirmed amount. Google calls CWV "not giant factors." The data shows CWV works as a gate. Failing hurts. Passing removes the penalty. Going beyond "good" produces no measurable ranking benefit.

Does my Lighthouse score affect SEO?

No. Google uses CrUX field data from real Chrome users. Lighthouse is a lab simulation. The score it produces is not used by Google's ranking systems in any way.

Does my PageSpeed Insights score affect SEO?

The overall score (0 to 100) does not. That is a Lighthouse lab score. The field data section of PageSpeed Insights shows CrUX data, which is what Google uses. Look at the field data. Ignore the score.

How long does it take for CWV improvements to affect rankings?

CrUX data uses a 28 day sliding window. After fixing a CWV issue, expect 4 to 6 weeks before you see the change reflected in Search Console data. Ranking changes (if any) appear after that.

Are Core Web Vitals more important than content quality?

No. Content relevance, quality, and backlinks are far more important ranking factors. CWV is a secondary signal. Fix severe CWV failures, then focus your budget on content and links.

What replaced FID in Core Web Vitals?

Interaction to Next Paint (INP) replaced First Input Delay (FID) on March 12, 2024. INP measures the latency of all user interactions during a visit, not just the first one. It is significantly harder to pass than FID was. See our complete INP guide.

Do Core Web Vitals affect rankings on mobile and desktop equally?

Google evaluates mobile and desktop separately. A site can pass on desktop and fail on mobile, or vice versa. Since most sites have worse performance on mobile and most searches happen on mobile, CWV ranking impact tends to be larger on mobile.

Can poor Core Web Vitals hurt my rankings even with great content?

In theory, yes. In practice, the impact is small. Mueller has said you are unlikely to see a big ranking drop from CWV issues alone. But combined with other weaknesses, poor CWV adds a negative signal to an already struggling page.

Does Core Web Vitals affect AI Search and Google AI Overviews?

Early data from a 2025 analysis of 107,000 pages suggests CWV acts as a constraint for AI search visibility, similar to regular search. Pages with severe CWV failures appeared less frequently in AI Overviews. But the correlation is weak and more research is needed.

Should I fix Core Web Vitals or invest in content?

Both. But if you have to choose, content wins. Get CWV to "good" as quickly as possible. Then redirect your budget to creating content that answers real user questions better than your competitors. That will always have a larger ranking impact than pushing CWV from "good" to "excellent."
